An npj Artificial Intelligence paper by authors from the University of Cambridge and ETH Zurich, published April 11, 2024, calls for formal mathematical definitions in explainable AI (XAI). It reviews over 200 papers and identifies terminology gaps that erode trust.

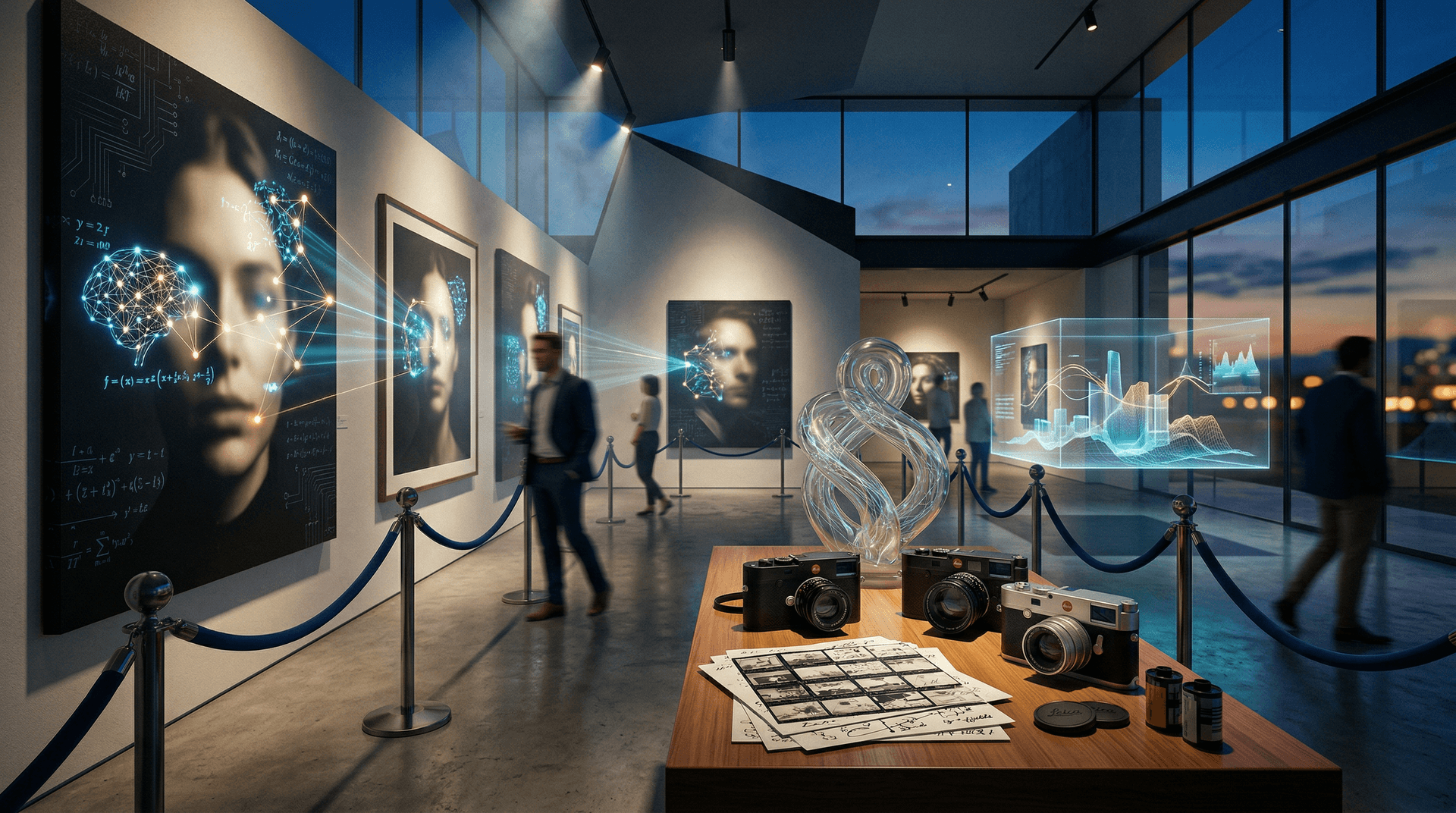

XAI Shortcomings Undermine Visual Arts

Current methods such as SHAP and LIME explain decisions post hoc, without formal guarantees that an explanation reflects what the model actually computed. The study cites 2023 NeurIPS benchmarks in which 40% of explanations fail adversarial attack tests.
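SHAP builds on Shapley values from cooperative game theory. As a minimal sketch of the underlying idea (not the SHAP library's API), the snippet below computes exact Shapley values for a hypothetical three-feature scoring function by averaging each feature's marginal contribution over every ordering. The `toy_score` function and its numbers are illustrative assumptions, not data from the study.

```python
from itertools import permutations


def shapley_values(value_fn, n_features):
    """Exact Shapley values: average marginal contribution over all orderings."""
    totals = [0.0] * n_features
    orderings = list(permutations(range(n_features)))
    for order in orderings:
        present = set()
        for i in order:
            before = value_fn(frozenset(present))
            present.add(i)
            after = value_fn(frozenset(present))
            totals[i] += after - before
    return [t / len(orderings) for t in totals]


def toy_score(present):
    """Hypothetical model: score as a function of which features are present."""
    score = 0.0
    if 0 in present:
        score += 2.0
    if 1 in present:
        score += 1.0
    if 0 in present and 2 in present:
        score += 3.0  # interaction between features 0 and 2
    return score


phi = shapley_values(toy_score, 3)
print(phi)  # [3.5, 1.0, 1.5]

# Efficiency property: attributions sum to the full score minus the empty score.
full = toy_score(frozenset({0, 1, 2}))
empty = toy_score(frozenset())
print(abs(sum(phi) - (full - empty)) < 1e-9)  # True
```

The exact computation scales factorially with the number of features, which is why practical tools approximate it. That approximation step is one place where guarantees can be lost.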

Artists using generative AI face deep opacity. Black-box models dominate visual media, from Midjourney prompts to Stable Diffusion upscaling. Formal axioms would guarantee that explanations are sound, minimal, and faithful.
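Faithfulness is often probed empirically with a deletion test: remove features in the order an explanation ranks them and watch how quickly the model's output falls. The sketch below is one common proxy, not the paper's formal definition. The linear model, weights, and attribution vectors are all hypothetical.

```python
def linear_model(x, weights):
    """Hypothetical model: a plain weighted sum."""
    return sum(w * v for w, v in zip(weights, x))


def deletion_curve(x, attributions, weights, baseline=0.0):
    """Delete features from most to least attributed; record output after each step."""
    order = sorted(range(len(x)), key=lambda i: abs(attributions[i]), reverse=True)
    current = list(x)
    outputs = [linear_model(current, weights)]
    for i in order:
        current[i] = baseline
        outputs.append(linear_model(current, weights))
    return outputs


weights = [4.0, 1.0, 0.5]
x = [1.0, 2.0, 2.0]

# For a linear model, weight * input is the true per-feature contribution.
faithful = [w * v for w, v in zip(weights, x)]
# A deliberately wrong explanation that ranks the least important feature first.
misleading = [0.1, 0.2, 5.0]

faithful_curve = deletion_curve(x, faithful, weights)
misleading_curve = deletion_curve(x, misleading, weights)
print(faithful_curve)    # [7.0, 3.0, 1.0, 0.0]
print(misleading_curve)  # [7.0, 6.0, 4.0, 0.0]

# A smaller area under the deletion curve suggests a more faithful ranking.
print(sum(faithful_curve) < sum(misleading_curve))  # True
```

A test like this gives evidence but no proof, which is the gap formal axioms are meant to close.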

Finance offers parallels. AI art NFTs generated $25 million in Q4 2023 sales, per CryptoSlam blockchain data. Opaque models deter institutional collectors, who need to perform due diligence.

Art Market Craves Verifiable Explanations

Galleries price AI works based on provenance and transparency. Paris Photo 2024 AI sections saw 15% sales growth year-over-year, per Art Basel/UBS Global Art Market Report 2024.

NFT platforms like Foundation report 30% higher buyer retention for audited drops. Blockchains can carry XAI artifacts in on-chain metadata, with smart contracts referencing saliency maps and gradient attributions.
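One way to tie an explanation to a token is to store a cryptographic digest of the explanation record in the token's metadata and keep the full artifact elsewhere. The sketch below illustrates that idea with Python's standard library. The record layout, the `model_id` and `method` fields, and the digest-only design are assumptions for illustration, not a description of any platform's actual scheme.

```python
import hashlib
import json


def explanation_digest(saliency_map, model_id, method):
    """Return a SHA-256 hex digest of a canonical JSON explanation record."""
    record = {
        "model_id": model_id,
        "method": method,
        # Round so that tiny float noise does not change the digest.
        "saliency": [[round(v, 6) for v in row] for row in saliency_map],
    }
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def verify_explanation(saliency_map, model_id, method, stored_digest):
    """Check that an explanation artifact matches a previously stored digest."""
    return explanation_digest(saliency_map, model_id, method) == stored_digest


# Hypothetical 2x2 saliency map and identifiers.
saliency = [[0.1, 0.9], [0.0, 0.3]]
digest = explanation_digest(saliency, "demo-model-v1", "gradient-saliency")

tampered = [[0.1, 0.9], [0.0, 0.4]]
print(verify_explanation(saliency, "demo-model-v1", "gradient-saliency", digest))  # True
print(verify_explanation(tampered, "demo-model-v1", "gradient-saliency", digest))  # False
```

A digest proves only that the artifact has not changed since minting. It says nothing about whether the explanation was faithful in the first place.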

High-risk sectors drive compliance. The EU AI Act mandates explanations for high-risk systems by August 2026. Art funds manage $2.5 billion in digital assets, per Deloitte's 2024 Art & Finance Report. They want XAI standards as formal as those governing SEC filings.

Explainable AI in Photography Workflows

AI upscales documentary photos via convolutional neural networks, replicating film grain. Formal XAI would trace pixel decisions back to training data, verifying that shadows and highlights contain no hallucinated detail.

Street photography tools detect gestures with Canny edge detection. Explanations audit biases in saliency maps, such as overrepresentation of light skin tones inherited from ImageNet distributions.

Fashion editorials employ negative space. Chiaroscuro lighting arises from latent vector interpolations; gradient analysis shows the model prioritizing rim light on silk textures over backgrounds.
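Latent vector interpolation can be made concrete with spherical linear interpolation (slerp), a technique widely used to blend two latent codes in generative models. The sketch below is a generic pure-Python version. It assumes non-zero latent vectors and is not taken from any specific image generator.

```python
import math


def slerp(z0, z1, t):
    """Spherical linear interpolation between two non-zero latent vectors."""
    dot = sum(a * b for a, b in zip(z0, z1))
    norm0 = math.sqrt(sum(a * a for a in z0))
    norm1 = math.sqrt(sum(b * b for b in z1))
    # Clamp to guard against floating-point drift outside [-1, 1].
    cos_omega = max(-1.0, min(1.0, dot / (norm0 * norm1)))
    omega = math.acos(cos_omega)
    if omega < 1e-8:
        # Nearly parallel vectors: fall back to linear interpolation.
        return [(1.0 - t) * a + t * b for a, b in zip(z0, z1)]
    sin_omega = math.sin(omega)
    w0 = math.sin((1.0 - t) * omega) / sin_omega
    w1 = math.sin(t * omega) / sin_omega
    return [w0 * a + w1 * b for a, b in zip(z0, z1)]


# Hypothetical 2-D latent codes standing in for two lighting styles.
z_flat = [1.0, 0.0]
z_chiaroscuro = [0.0, 1.0]

midpoint = slerp(z_flat, z_chiaroscuro, 0.5)
print(midpoint)  # both components close to 0.7071
```

Unlike a straight linear blend, slerp keeps the midpoint of two unit vectors at unit length. This is the usual reason for preferring it when latents are drawn from a Gaussian prior.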

Midjourney creates decisive moments in the manner of Cartier-Bresson. Explanations reveal golden ratio framing and desaturated shadows echoing Eggleston's 1970s dye-transfer prints.

Refik Anadol's Machine Hallucinations series illustrates the point. His 2023 MoMA Latitude installation mapped 200 million images into sculptures using XAI prototypes. Collectors paid $1.2 million at Sotheby's.

Video and Digital Installations Benefit

Single-channel video installations need temporal explanations. Grad-CAM++ visualizes frame-by-frame attention in AI-edited footage, linking pans to optical flow vectors.

GANs power digital curation. XAI breaks down discriminator loops, explaining StyleGAN's elongated torsos in avatars via Renaissance proportions.

NFT editions include blockchain details. Beeple's Ethereum "Everydays" minted 10,000 editions with XAI hashes, lifting 2023 OpenSea secondary sales 22%.

Forward Path Shapes Visual Culture

The npj paper proposes three axioms: soundness, minimality, and faithfulness. An extension of these in the open-source Captum library for PyTorch is slated for Q3 2024.
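Axiomatic attribution already has a well-known precedent in Integrated Gradients, one of the methods Captum ships. It satisfies a completeness property: the attributions sum to the difference between the model's output at the input and at a baseline. The sketch below approximates the method in pure Python with numerical gradients. It does not use Captum's API, and the model `f` is a hypothetical stand-in.

```python
def numerical_gradient(f, x, eps=1e-5):
    """Central-difference gradient of f at x."""
    grad = []
    for i in range(len(x)):
        up, down = list(x), list(x)
        up[i] += eps
        down[i] -= eps
        grad.append((f(up) - f(down)) / (2 * eps))
    return grad


def integrated_gradients(f, x, baseline, steps=50):
    """Approximate Integrated Gradients with a midpoint Riemann sum."""
    totals = [0.0] * len(x)
    for k in range(steps):
        alpha = (k + 0.5) / steps
        point = [b + alpha * (v - b) for v, b in zip(x, baseline)]
        grad = numerical_gradient(f, point)
        for i, g in enumerate(grad):
            totals[i] += g
    return [(v - b) * t / steps for v, b, t in zip(x, baseline, totals)]


def f(x):
    """Hypothetical differentiable model: x0 squared plus three times x1."""
    return x[0] ** 2 + 3.0 * x[1]


x = [2.0, 1.0]
baseline = [0.0, 0.0]
attributions = integrated_gradients(f, x, baseline)
print(attributions)  # approximately [4.0, 3.0]

# Completeness check: attributions sum to f(x) - f(baseline).
gap = abs(sum(attributions) - (f(x) - f(baseline)))
print(gap < 1e-4)  # True
```

A check like the last line is the kind of machine-verifiable statement an axiom makes possible: it either holds for a given explanation or it does not.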

Adobe Photoshop 2024.5 includes proto-XAI. Lightroom overlays heatmaps for sky replacements, citing noise kernels.

Festivals speed adoption. Rencontres d'Arles 2024 hosts XAI workshops for 500 attendees. Unseen Amsterdam shows 25 AI pigment prints with QR attributions.

Art Basel Miami 2024 Unlimited features three XAI installations, with sales potential of $50 million, per UBS.

Investment Implications for Explainable AI Art

Formal explainable AI stabilizes markets. Artnet's AI Art Index rose 18% in 2023, beating photography by 5 percentage points. Collectors demand XAI audits comparable to 10-K filings.

IEEE plans to standardize XAI by Q2 2025. VCs have invested $500 million in explainable tools, per PitchBook.

Artists turn AI into transparent media. Galleries exhibit boldly. Visual culture grows on verified intent.